Body Language for VUIs

Exploring Gestures to Enhance Interactions with Voice User Interfaces

Published in ACM DIS (Designing Interactive Systems) 2024

Introduction

Natural Language User Interfaces (NLUIs) enable interaction with AI agents through text and voice. Advances in large language models (LLMs) such as GPT-4 and Qwen3, along with multimodal models like GPT-4-Vision, have expanded NLUI capabilities to include visual input. Voice User Interfaces (VUIs), a key subset of NLUIs, are widely used in personal assistants and smart home devices. With the rise of wearable technology and multi-sensor systems, VUIs can now interpret speech, surroundings, and even gestures, opening new possibilities for richer interactions. This research focuses on future multimodal VUIs capable of understanding natural language, perceiving environments, and detecting body movements. While prior work has explored gestures alongside voice interfaces, there is limited systematic research on how gestures can enhance the interaction loop with VUIs that understand speech, context, and movement. This project explores the potential of gesture-based interactions in multimodal VUIs, aiming to inform more intuitive and natural user experiences.

Research Questions & Process

- RQ1 - Patterns: How do users interact with multimodal VUIs using explicit and implicit gestures?

- RQ2 - Preferences: What are user preferences for interacting with multimodal VUIs in different conditions?

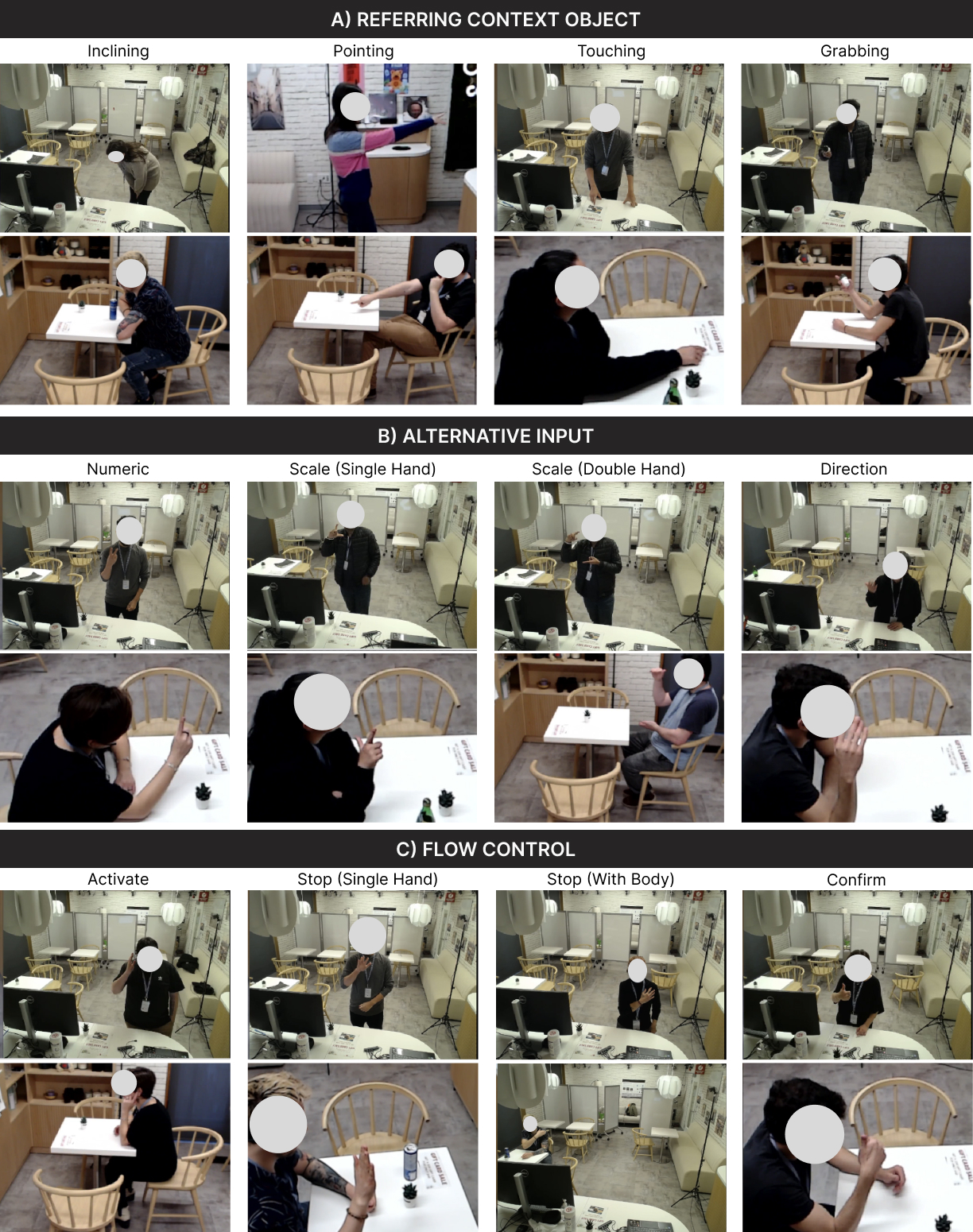

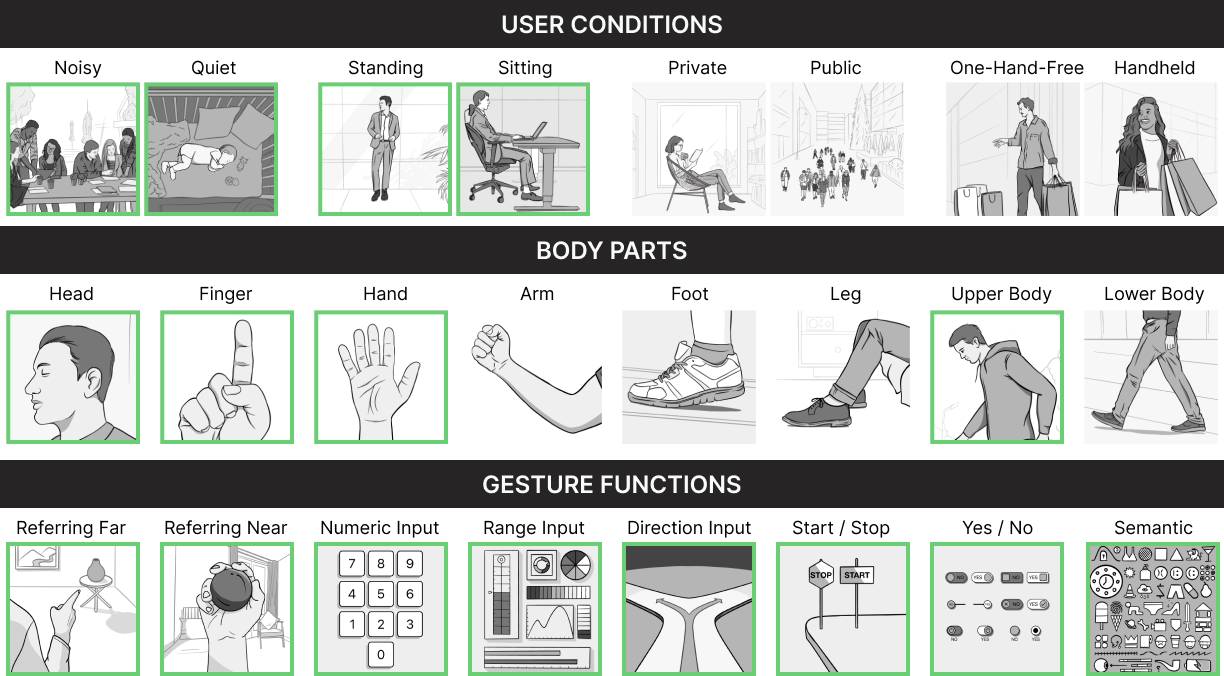

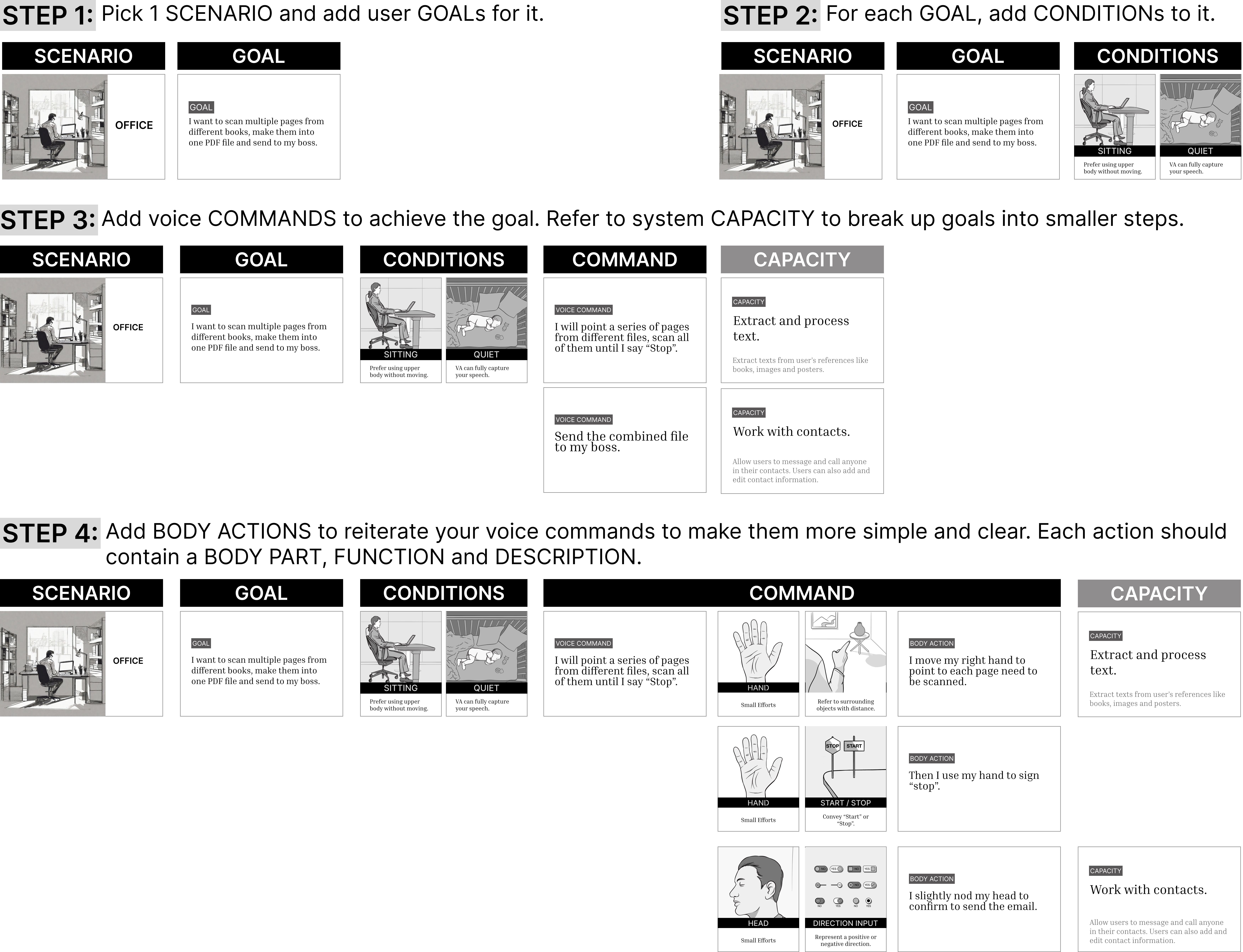

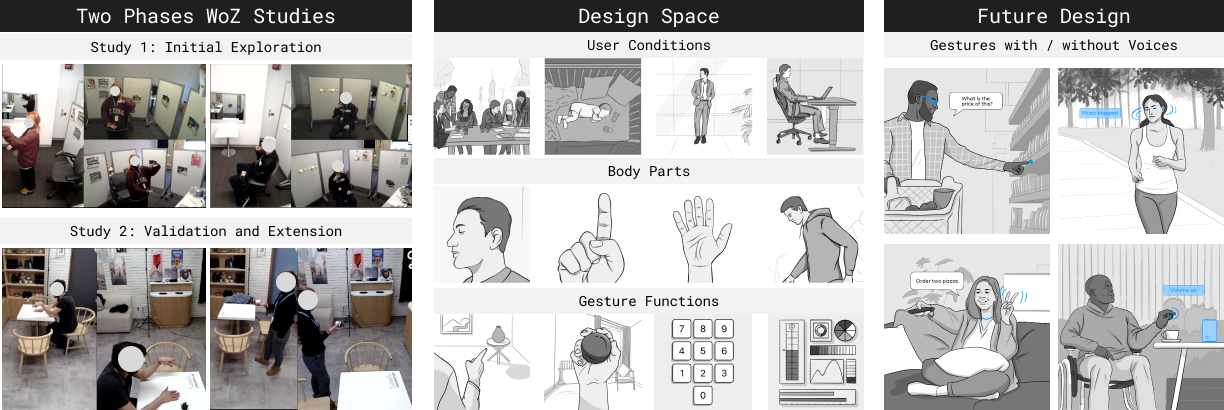

We conducted a two-phase Wizard-of-Oz (WoZ) study to explore how implicit and explicit gestures can enhance interactions with multimodal VUIs. In Phase 1, six participants engaged in six simulated VUI scenarios based on common tasks from existing literature. Our goals were to observe and document typical gestures and identify recurring patterns of gesture-intention mapping, forming an initial design space. Phase 2 involved a staged cafe environment with 12 participants, where we validated and refined the gesture-based interaction design space, while also gathering user preferences on multimodal VUIs across different tasks and environments.

Research Contributions

- Empirical insights into users’ opinions and preferences regarding multimodal VUIs with voice and gestures, and the factors that influence them;

- A design space for gesture interaction in multimodal VUIs, with demonstrations showcasing future multimodal VUI interactions.